In the previous post on this topic, we discussed Dilworth’s theorem on chains and antichains in a general partially ordered set. In particular, whatever the size of the largest antichain in a poset, it is possible to partition the poset into exactly that many chains. So for various specific posets, or the directed acyclic graphs associated to them, we are interested in the size of this largest antichain.

The following example turned out to be more interesting than I’d expected. At a conventional modern maths olympiad, there are typically three questions on each paper, and for reasons lost in the mists of time, each student receives an integer score between 0 and 7 per question. A natural question to ask is “how many students need to sit a paper before it’s guaranteed that one will score at least as highly as another on every question?” (I’m posing this as a straight combinatorial problem – the correlation between scores on different questions will be non-zero and presumably positive, but that is not relevant here.)

The set of outcomes is clearly $\{0,1,\ldots,7\}^3$, with the usual weak domination partial order inherited from $\mathbb{R}^3$. Then an antichain corresponds to a set of triples of scores such that no triple dominates another triple. So the answer to the question posed is: “the size of the largest antichain in this poset, plus one.”

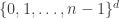

In general, we might ask about $\{1,2,\ldots,n\}^d$, again with the weak domination ordering. This directed graph, which generalises the hypercube as well as our example, is called the grid.

Heuristics for the largest antichain

Retaining the language of test scores on multiple questions is helpful. In the previous post, we constructed a partition of the poset into antichains, indexed by the elements of some maximal chain, by starting with the sources, then looking at everything descended only from sources, and so on. (Recall that the statement that this is possible was referred to as the dual of Dilworth’s theorem.) In the grid, there’s a lot of symmetry (in particular under the mapping $x\mapsto n+1-x$ in every coordinate), and so you end up with the same family of antichains whether you work upwards from the sources or downwards from the sinks. (Or vice versa depending on how you’ve oriented your diagram…) The layers of antichains also have a natural interpretation – each layer corresponds to a given total score. It’s clear a priori why each of these is an antichain. If A scores the same as B overall, but strictly more on the first question, this must be counterbalanced by a strictly lower score on another question.

So a natural guess for the largest antichain is the largest antichain corresponding to some fixed total score. Which total score should this be? It ought to be the middle layer, that is total score $\frac{(n+1)d}{2}$, or the two values directly on either side if this isn’t an integer. My intuition was probabilistic. The uniform distribution on the grid is achieved by IID uniform distributions in each coordinate, which you can think of as a random walk, especially if you subtract off the mean first. It feels that any symmetric random walk should have mode zero or next-to-zero. Certainly this works asymptotically in a rescaled sense by CLT, and in a slightly stronger sense by local CLT, but we don’t really want asymptotics here.

When I started writing the previous paragraph, I assumed there would be a simple justification for the claim that the middle layer(s) was largest, whether by straight enumeration, or some combinatorial argument, or even generating functions. Perhaps there is, and I didn’t spot it. Induction on d definitely works though, with a slightly stronger hypothesis that the layer sizes are symmetric around the median, and monotone on either side of the median. The details are simple and not especially interesting, so I won’t go into them.
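The symmetry and monotonicity of the layer sizes is easy to check numerically for small cases. The following is a minimal sketch, not a proof: it builds the layer sizes one coordinate at a time (a discrete convolution), and the function names `layer_sizes` and `is_symmetric_unimodal` are my own. It assumes coordinates run over 0,...,n-1, which only shifts the total scores and does not change the layer sizes.

```python
def layer_sizes(n, d):
    """Sizes of the layers of {0,...,n-1}^d, indexed by total score."""
    sizes = [1]  # d = 0: a single (empty) score sequence with total 0
    for _ in range(d):
        # Adding one more coordinate convolves with the all-ones vector of length n.
        new = [0] * (len(sizes) + n - 1)
        for total, count in enumerate(sizes):
            for score in range(n):
                new[total + score] += count
        sizes = new
    return sizes


def is_symmetric_unimodal(seq):
    """True if seq is a palindrome that rises to its maximum and then falls."""
    if seq != seq[::-1]:
        return False
    peak = seq.index(max(seq))
    rising = all(seq[i] <= seq[i + 1] for i in range(peak))
    falling = all(seq[i] >= seq[i + 1] for i in range(peak, len(seq) - 1))
    return rising and falling


if __name__ == "__main__":
    sizes = layer_sizes(8, 3)  # the olympiad example: three questions, scores 0..7
    print(max(sizes), is_symmetric_unimodal(sizes))
```

For the olympiad example the two middle layers (total scores 10 and 11) are the joint largest.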

From now on, the hypothesis is that this middle layer of the grid is the largest antichain. Why shouldn’t it, for example, be some mixture of middle-ish layers? (*) Well, heuristically, any score sequence in one layer removes several possibilities from a directly adjacent layer, and it seems unlikely that this effect is going to cancel out if you take some intermediate number of score sequences in the first layer. Also, the layers get smaller as you go away from the middle, so because of the large amount of symmetry (coordinates are exchangeable etc), it feels reasonable that there should be surjections between layers in the outward direction from the middle. The union of all these surjections gives a decomposition into chains.

This result is in fact true, and its proof by Bollobas and Leader, using shadows and compression, can be found in the very readable Sections 0 and 1 of [1].

Most of the key ideas of a compression argument are present in the case n=2, for which some notes by Leader can be found here, starting with Proof 1 of Theorem 3, the approach of which is developed over subsequent sections. We treat the case n=2, focusing on a particularly slick approach that does not generalise as successfully. We also return to the original case d=3 without using anything especially exotic.

Largest antichain in the hypercube – Sperner’s Theorem

The hypercube $\{0,1\}^d$ is the classical example. There is a natural correspondence between the vertices of the hypercube, and subsets of $[d]$. The ordering on the hypercube corresponds to the ordering given by containment on $\mathcal{P}([d])$. Almost by definition, the k-th layer corresponds to subsets of size k, and thus includes $\binom{d}{k}$ subsets. The claim is that the size of the largest antichain is $\binom{d}{\lfloor d/2 \rfloor}$, corresponding to the middle layer if d is even, and one of the two middle layers if d is odd. This result is true, and is called Sperner’s theorem.
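For very small d the claim can be confirmed by exhaustive search. The sketch below is only a sanity check under my own conventions: subsets of [d] are encoded as bitmasks, and `largest_antichain_size` is a hypothetical helper that enumerates every antichain by backtracking. The number of antichains grows extremely fast, so this is only sensible for d up to about 5.

```python
from math import comb


def largest_antichain_size(d):
    """Size of the largest antichain in the power set of a d-element set (brute force)."""
    best = 0

    def comparable(a, b):
        # As bitmasks, a is a subset of b exactly when a & b == a.
        return (a & b) == a or (a & b) == b

    def extend(start, chosen):
        nonlocal best
        best = max(best, len(chosen))
        # Only add subsets with a larger bitmask, so each antichain is visited once.
        for s in range(start, 1 << d):
            if all(not comparable(s, t) for t in chosen):
                chosen.append(s)
                extend(s + 1, chosen)
                chosen.pop()

    extend(0, [])
    return best


if __name__ == "__main__":
    for d in range(1, 6):
        print(d, largest_antichain_size(d), comb(d, d // 2))
```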

I know a few proofs of this from the Combinatorics course I attended in my final year at Cambridge. As explained, I’m mostly going to ignore the arguments using compression and shadows, even though these generalise better.

As in the previous post, one approach is to exhibit a covering family of exactly this number of disjoint chains. Indeed, this can be done layer by layer, working outwards from the middle layer(s). The tool here is Hall’s Marriage Theorem, and we verify the relevant condition by double-counting. Probably the hardest case is demonstrating the existence of a matching between the middle pair of layers when d is odd.

Take d odd, and let $d' := \lfloor d/2 \rfloor$. Now consider any subset S of the $d'$-th layer $\binom{[d]}{d'}$. We now let the upper shadow of S be

$$\partial^+(S):= \left\{A\in \binom{[d]}{d'+1}\,:\, \exists B\in S,\ B\subset A\right\},$$

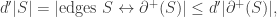

the sets in the $(d'+1)$-th layer which lie above some set in S. To apply Hall’s Marriage theorem, we have to show that $|\partial^+(S)|\ge |S|$ for all choices of S.

We double-count the number of edges in the hypercube from $S$ to $\partial^+(S)$. Firstly, for every element $B\in S$, there are exactly $d-d'=d'+1$ relevant edges, one for each element of $[d]$ not in $B$. Secondly, for every element $A\in\partial^+(S)$, there are exactly $d'+1$ edges to some element of $\binom{[d]}{d'}$, one for each element of $A$, and so in particular there are at most $d'+1$ edges to elements of S. Thus

$$(d'+1)\,|S| \le (d'+1)\,|\partial^+(S)|,$$

which is exactly what we require for Hall’s MT. The argument for the matching between other layers is the same, with a bit more notation, but also more flexibility, since it isn’t a perfect matching.
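Hall’s theorem only guarantees that the matching between the two middle layers exists. To see one concretely, here is a minimal sketch that finds it with the standard augmenting-path algorithm for bipartite matching. This is a swapped-in technique for illustration, not part of the argument above, and the name `middle_layer_matching` is my own. It assumes d is odd and the ground set is 0,...,d-1.

```python
from itertools import combinations


def middle_layer_matching(d):
    """Match every (d//2)-subset of range(d) to a superset with one more element (d odd)."""
    lower = [frozenset(c) for c in combinations(range(d), d // 2)]
    match_of_upper = {}  # upper-layer set -> lower-layer set currently matched to it

    def try_assign(b, seen):
        # Look for an upper neighbour of b, re-routing earlier matches if necessary.
        for x in range(d):
            if x in b:
                continue
            a = b | {x}
            if a in seen:
                continue
            seen.add(a)
            if a not in match_of_upper or try_assign(match_of_upper[a], seen):
                match_of_upper[a] = b
                return True
        return False

    for b in lower:
        if not try_assign(b, set()):
            raise ValueError("no matching saturating the lower layer")
    return {b: a for a, b in match_of_upper.items()}


if __name__ == "__main__":
    for b, a in sorted(middle_layer_matching(5).items(), key=lambda kv: sorted(kv[0])):
        print(sorted(b), "->", sorted(a))
```

For d=5 this pairs each of the 10 two-element sets with a distinct three-element superset.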

The second proof looks at maximal chains. Recall, in this context, a maximal chain is a sequence $\mathcal{C}=B_0\subset B_1\subset\ldots\subset B_d$ where each $B_k\in \binom{[d]}{k}$. We now consider some largest-possible antichain $\mathcal{A}$, and count how many maximal chains include an element $A\in\mathcal{A}$. If $|A|=k$, it’s easy to convince yourself that there are $k!(d-k)!$ such maximal chains. However, given $A\ne A'\in\mathcal{A}$, the set of maximal chains containing A and the set of maximal chains containing A’ are disjoint, since $\mathcal{A}$ is an antichain. From this, since there are $d!$ maximal chains in total, we obtain

$$\sum_{A\in\mathcal{A}} |A|!\,(d-|A|)! \le d!, \quad\text{equivalently}\quad \sum_{A\in\mathcal{A}} \binom{d}{|A|}^{-1} \le 1. \qquad (**)$$

Normally after a change of notation, so that we are counting the size of the intersection of the antichain with each layer, this is called the LYM inequality after Lubell, Yamamoto and Meshalkin. The heuristic is that the sum of the proportions of layers taken up by the antichain is at most one. This is essentially the same as earlier at (*). This argument can also be phrased probabilistically, by choosing a *random* maximal chain, and considering the probability that it intersects the proposed largest antichain, which is, naturally, at most one. Of course, the content is the same as this deterministic combinatorial argument.

Either way, from (**), the statement of Sperner’s theorem follows rapidly, since we know that $\binom{d}{|A|}\le \binom{d}{\lfloor d/2\rfloor}$ for all A.
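Spelled out, the final step is a one-line chain of inequalities. Here (**) is used in the form where the count of maximal chains through each $A$ has been divided by the total number $d!$ of maximal chains:

```latex
\frac{|\mathcal{A}|}{\binom{d}{\lfloor d/2\rfloor}}
  \;=\; \sum_{A\in\mathcal{A}} \binom{d}{\lfloor d/2\rfloor}^{-1}
  \;\le\; \sum_{A\in\mathcal{A}} \binom{d}{|A|}^{-1}
  \;\le\; 1,
\qquad\text{so}\qquad
|\mathcal{A}| \;\le\; \binom{d}{\lfloor d/2\rfloor}.
```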

Largest antichain in the general grid

Instead of attempting a proof or even a digest of the argument in the general case, I’ll give a brief outline of why the previous arguments don’t transfer immediately. It’s pretty much the same reason for both approaches. In the hypercube, there is a lot of symmetry within each layer. Indeed, almost by definition, any vertex in the k-th layer can be obtained from any other vertex in the k-th layer just by permuting the labels (or permuting the coordinates if thinking as a vector).

The hypercube ‘looks the same’ from every vertex, but that is not true of the grid. Consider for clarity the n=8, d=3 case we discussed right at the beginning, and compare the scores (7,0,0) and (2,2,3). The number of maximal chains through (7,0,0) is $\binom{14}{7}$, while the number of maximal chains through (2,2,3) is $\binom{7}{2,2,3}\binom{14}{5,5,4}$, and the latter is a lot larger, which means any attempt to use the second argument is going to be tricky, or at least require an extra layer of detail. Indeed, exactly the same problem arises when we try and use Hall’s condition to construct the optimal chain covering directly. In the double-counting section, it’s a lot more complicated than just multiplying both sides by the same constant, as was the case in the middle of the hypercube.
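To put numbers on the comparison, here is a minimal sketch that counts maximal chains through a given score triple. It assumes scores run over 0,...,n-1 and that a maximal chain climbs from the all-zero triple to the all-(n-1) triple one mark at a time, so the count is a product of two multinomial coefficients. The helper names are my own.

```python
from math import factorial


def multinomial(parts):
    """Number of orderings of sum(parts) steps with parts[i] steps of type i."""
    total = factorial(sum(parts))
    for p in parts:
        total //= factorial(p)
    return total


def maximal_chains_through(point, n):
    """Maximal chains of {0,...,n-1}^d passing through the given point."""
    below = multinomial(list(point))                   # ways up from (0,...,0)
    above = multinomial([n - 1 - x for x in point])    # ways on to (n-1,...,n-1)
    return below * above


if __name__ == "__main__":
    print(maximal_chains_through((7, 0, 0), 8))  # 3432
    print(maximal_chains_through((2, 2, 3), 8))  # 52972920
```

So even though both triples have total score 7, the second lies on more than fifteen thousand times as many maximal chains as the first.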

Largest antichain in the d=3 grid

We can, however, do the d=3 case. As we will see, the main reason we can do the d=3 case is that the d=2 case is very tractable, and we have lots of choices for the chain coverings, and can choose one which is well-suited to the move to d=3. Indeed, when I set this problem to some students, an explicit listing of a maximal chain covering was the approach some of them went for, and the construction wasn’t too horrible to state.

[Another factor is that it is computationally feasible to calculate the size of the middle layer, which is much more annoying in d>3.]

[I’m redefining the grid here as $\{0,1,\ldots,n-1\}^d$ rather than $\{1,2,\ldots,n\}^d$.]

The case distinction between n even and n odd is going to make both the calculation and the argument annoying, so I’m only going to treat the even case, since n=8 was the original problem posed. I should be honest and confess that I haven’t checked the n odd case, but I assume it’s similar.

So when n is even, there are two middle layers, namely $\frac{3n}{2}-2$ and $\frac{3n}{2}-1$ (corresponding to total score 10 and total score 11 in the original problem). I calculated the number of elements in the $\frac{3n}{2}-1$ layer by splitting based on the value $a$ of the first coordinate. I found it helpful to decompose the resulting sum as

$$\sum_{a=0}^{n/2-1}\left(\frac{n}{2}+a\right)+\sum_{a=n/2}^{n-1}\left(\frac{3n}{2}-a\right),$$

based on whether there is an upper bound, or a lower bound on the value taken by the second coordinate. This is not very interesting, and I obtained the answer $\frac{3n^2}{4}$, and of course this is an integer, since n is even.
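The layer size is easy to confirm by direct enumeration for small even n. The sketch below is a check rather than a derivation: `middle_layer_size` counts triples by brute force, and `split_sum` is one way (my assumption about how to organise it) to split on the first coordinate a. When a < n/2 the remaining total exceeds n-1, so the second coordinate is bounded below, leaving n/2 + a options; otherwise it is bounded above, leaving 3n/2 - a options.

```python
from itertools import product


def middle_layer_size(n):
    """Number of triples in {0,...,n-1}^3 with total 3n/2 - 1 (n even), by enumeration."""
    target = 3 * n // 2 - 1
    return sum(1 for p in product(range(n), repeat=3) if sum(p) == target)


def split_sum(n):
    """The same count, split on the value a of the first coordinate (n even)."""
    half = n // 2
    lower_bounded = sum(half + a for a in range(half))          # a = 0, ..., n/2 - 1
    upper_bounded = sum(3 * half - a for a in range(half, n))   # a = n/2, ..., n - 1
    return lower_bounded + upper_bounded


if __name__ == "__main__":
    for n in (2, 4, 6, 8):
        print(n, middle_layer_size(n), split_sum(n), 3 * n * n // 4)
```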

Now to show that any antichain has size at most $\frac{3n^2}{4}$. Here we use our good control on the chain coverings in the case d=2. We note that there is a chain covering of the (n,d=2) grid where the chains have 2n-1, 2n-3,…, 3, 1 elements (%). We get this by starting with a maximal chain, then taking a maximal chain on what remains etc. It’s pretty much the first thing you’re likely to try.
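One explicit version of the covering (%) is sketched below, under my own choice of which maximal chain to peel off at each stage: go up the leftmost remaining column, then along the topmost remaining row. Coordinates are assumed to run over 0,...,n-1, and `chain_cover` is a hypothetical name.

```python
def chain_cover(n):
    """Partition {0,...,n-1}^2 into chains of lengths 2n-1, 2n-3, ..., 3, 1."""
    chains = []
    for j in range(n):
        top = n - 1 - j
        up = [(j, y) for y in range(top + 1)]        # climb column j from the bottom
        right = [(x, top) for x in range(j + 1, n)]  # then move right along row `top`
        chains.append(up + right)
    return chains


if __name__ == "__main__":
    for chain in chain_cover(4):
        print(len(chain), chain)
```

Each step within a chain raises exactly one coordinate by one, so consecutive points are comparable, and every point of the grid lies in exactly one chain.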

Consider an antichain with size A in the (n,d=3) grid, and project into the second and third coordinates. The image points are distinct, because otherwise a non-trivial pre-image would be a chain. So we have A points in the (n,d=2) grid. How many can be in each chain in the decomposition (%)? Well, if there are more than n in any chain in (%), then two must have been mapped from elements of the (n,d=3) grid with the same first coordinate, and so one of these elements dominates the other. So in fact there are at most n image points in any of the chains of (%). So we now have a bound of $n^2$. But of course, some of the chains in (%) have length less than n, so we are throwing away information. Indeed, the number of image points in a given chain is at most

$$\min(n,\ \text{length of the chain}),$$

and so the number of image points in total is bounded by

$$n+n+\ldots+n+(n-1)+(n-3)+\ldots+3+1,$$

where there are n/2 copies of n in the first half of the sum. Evaluating this sum gives $\frac{3n^2}{4}$, exactly as we wanted.
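As a cross-check on small cases, the largest antichain can also be computed by brute force. The sketch below uses a different, standard route rather than the argument above: by Dilworth’s theorem the largest antichain equals the minimum number of chains in a cover, and that minimum equals the number of points minus a maximum matching in the bipartite graph of strict comparabilities. The names `width` and `capped_bound` are my own, coordinates are assumed to run over 0,...,n-1, and the recursion is only intended for small grids.

```python
from itertools import product


def width(n, d):
    """Largest antichain of {0,...,n-1}^d under weak domination, by brute force."""
    points = list(product(range(n), repeat=d))
    # above[p] lists every q != p that dominates p; this relation is already transitive.
    above = {p: [q for q in points if q != p and all(a <= b for a, b in zip(p, q))]
             for p in points}
    matched_to = {}  # q -> the p currently linked to q

    def augment(p, seen):
        # Standard augmenting-path search for bipartite matching.
        for q in above[p]:
            if q in seen:
                continue
            seen.add(q)
            if q not in matched_to or augment(matched_to[q], seen):
                matched_to[q] = p
                return True
        return False

    matching = sum(augment(p, set()) for p in points)
    # Minimum chain cover = points - maximum matching, which is the width by Dilworth.
    return len(points) - matching


def capped_bound(n):
    """Sum of min(n, length) over chains of lengths 2n-1, 2n-3, ..., 1."""
    return sum(min(n, 2 * n - 1 - 2 * j) for j in range(n))


if __name__ == "__main__":
    for n in (2, 4):
        print(n, width(n, 3), capped_bound(n), 3 * n * n // 4)
```

For n=2 and n=4 all three quantities agree, and `capped_bound(8)` gives 48, so the answer to the original question is 49 students.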

References

[1] – Bollobas, Leader (1991) – Compressions and Isoperimetric Inequalities. Available open-access here.
